Instant AI-fication – Flexible and fun image generation

01

Task

Create (Almost) Real-Time Experiences

Generative AI often requires a significant cognitive investment. You sit down, think up a prompt, type it in, and watch eagerly as a text or image slowly takes shape in front of your eyes. This mental and temporal overhead turns an essentially magical "Wow" into a slow "Aha," which gets stuck in the rational part of your brain. Not exactly a smooth experience with instant gratification.

02

Emotionalize

Stop Typing, Waiting, Typing

Many users experience generative image AIs through meticulously crafted text prompts. However, models like Stable Diffusion and services like Midjourney increasingly offer enhanced image control through visual and stylistic references. Stable Diffusion, in particular, has gained popularity because it features its own ecosystem of control options: the ControlNet architecture.

02a

ControlNet

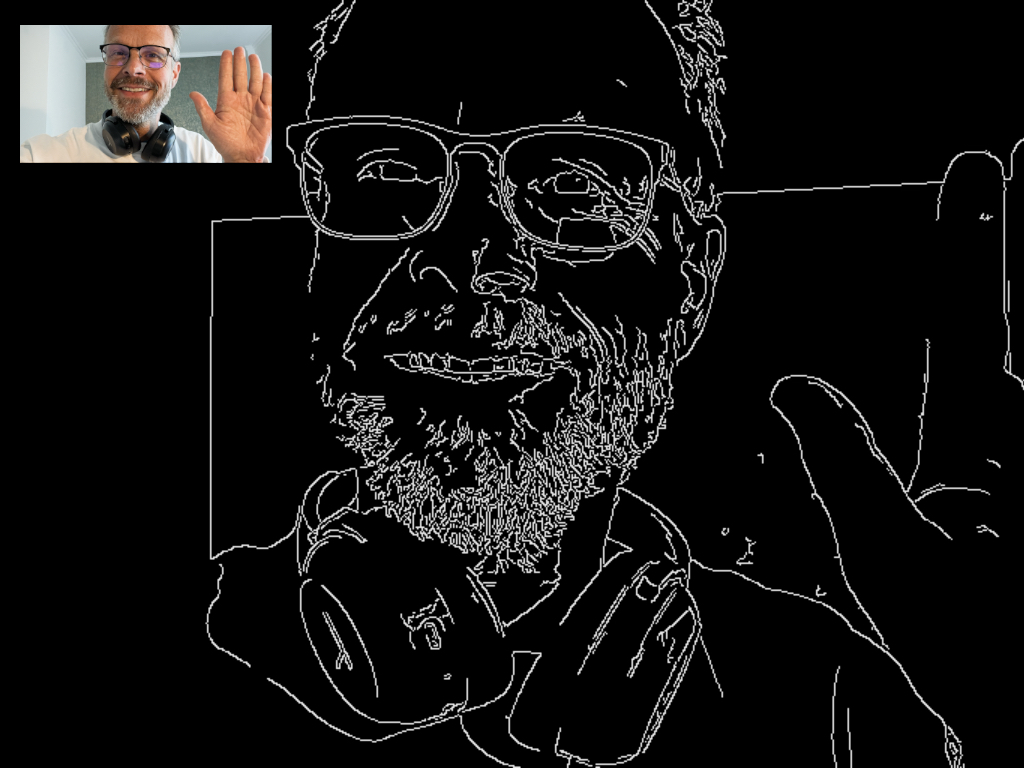

Canny Edges

There are ControlNet models with very strict guidelines. For example, *Canny Edges* transform input images into edge lines, leaving Stable Diffusion with the task of coloring within these lines accurately.

02b

ControlNet

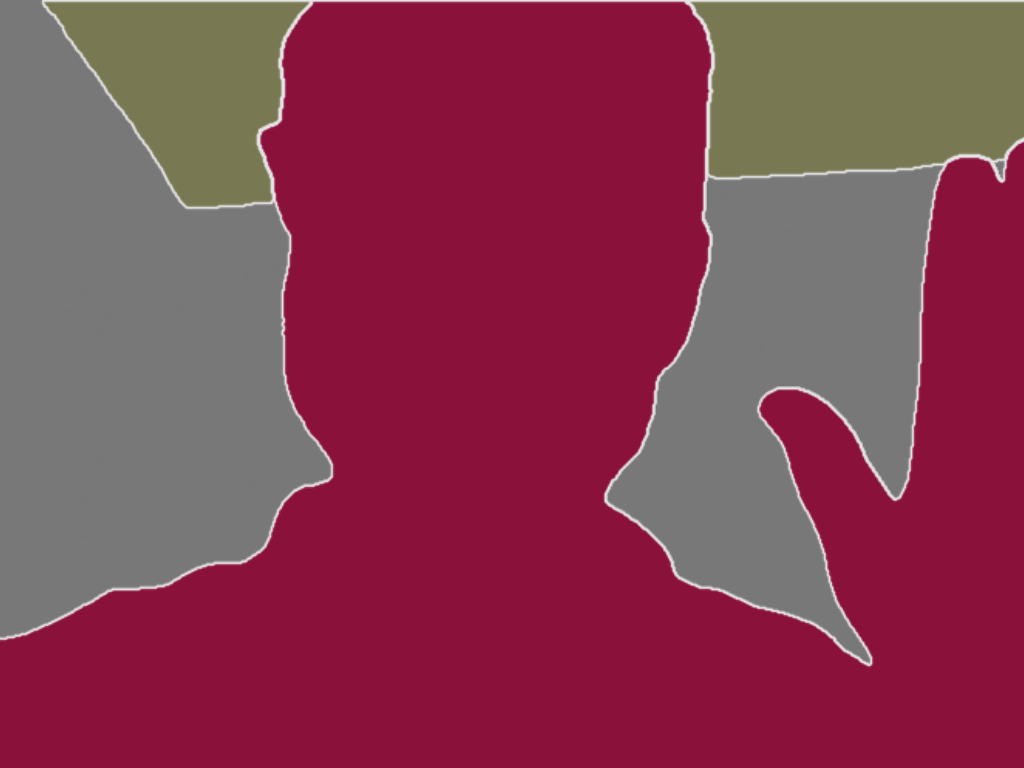

Segmentation

Other models allow for more freedom. *Segmentation* divides input images into defined units, giving Stable Diffusion more leeway in interpreting the content and aesthetics of the guidelines.

02c

ControlNet

Depth Map

However, the preferred ControlNet for a task that requires creative control and artistic freedom is *depth map*. It builds on a black-and-white input image where distances are encoded. Dark areas are far away, and light areas are close to the camera. The ControlNet model determines, based on shape and placement in three-dimensional space, where each image element is generated.

This ControlNet not only assists in generation but also in estimating depth information in regular camera images. For example, it can recognize through intensive, specialized training that a billboard in our snapshot is **in front** of the sky and **behind** a truck, and create a depth map based on this trained world knowledge. The downside is that depth determination adds a few extra seconds, which stand in the way of our goal of a near-to-real-time representation.

03

Shortcut

Speed and Accuracy with Lidar

Fortunately, depth maps are not an invention of the AI era. 3D sensors have been able to represent their measurements in black and white images for some time. The most prominent example is Lidar, which Apple has been incorporating into its iPhones for several years. It supports iOS's ARKit in spatial orientation and is fast and precise even in poor lighting conditions, delivering up to 60 depth maps per second.

Like many iOS features, Apple has deeply hidden this system gem behind regulated APIs. Luckily, access is provided by individual developers.

The [Record3d App](https://record3d.app/) not only reliably reads depth maps from the iPhone Lidar but also streams them to connected devices for further processing. Repositories with example code in Python and C++ provided by the developer jump start the work.

04

Local

Local Script as a Sender

On a laptop, the images can be adjusted to the required depth range of the ControlNet and converted for forwarding to external services. Additionally, we add a short prompt to specify what we want to transform the image into.

05

Remote

Speed in the Cloud

The most important part is ahead of us. We don't want to stare at the depth map but at an interestingly generated image with fun content. So the depth map must be translated into an artwork of our choice using Stable Diffusion and an integrated ControlNet. And it has to be done at high speed. This is where two wonderful developments of the AI era come to our aid.

06

Model

A Gen-AI Turbo

About six months ago, Stable Diffusion released the SDXL Turbo architecture. Where an image previously took 20 to 30 iterations to generate, now one or two are sufficient. This means a many-fold increase in speed from start to finished image.

To maintain as much control as possible, I integrated ControlNet, the base model, and generation into a lean, fast pipeline, avoiding the need for bulky, heavy Stable Diffusion UIs. The magical part of the pipeline (ControlNet and Gen AI) is only a few lines long:

#import stuff

depth_map = ControlNetModel.from_pretrained(

"diffusers/controlnet-depth-sdxl-1.0",

torch_dtype=torch.float16,

).to("cuda")

vae = AutoencoderKL.from_pretrained("madebyollin/sdxl-vae-fp16-fix",

torch_dtype=torch.float16)

controlnet = depth_map

pipe = StableDiffusionXLControlNetPipeline.from_pretrained(

"stabilityai/sdxl-turbo",

controlnet=controlnet,

vae=vae,

torch_dtype=torch.float16,

)

pipe.enable_model_cpu_offload()

# Define prompt and params here

output = pipe(

prompt, negative_prompt=negative_prompt, image=init_image,

num_inference_steps=num_inference_steps,

strength=strength, guidance_scale=guidance_scale,

controlnet_conditioning_scale=controlnet_conditioning_scale,

).images[0]

07

Service

GPU in the Cloud

Runpod is a service that provides storage and computing power over the internet. While it's not the only one, it has created a particularly developer-friendly and accessible setup. With a few configuration steps, you can start large machines with large GPUs at reasonable costs and shut them down instantly after use. (GPUs, as a reminder, are processor units originally developed for graphics applications. Handling many small calculations quickly and in parallel comes in handy for AI applications.)

For a Runpod instance, all you need is a library, a configuration file that defines storage size, GPU type, and required AI libraries. Plus a Python script capable of receiving input as JSON, processing it remotely, and returning the results as JSON. Nada mas.

08

Local

Local Script as a Receiver

The script on the local computer now has little work left. Once it receives the generated AI image data, which was created remotely with full computing power, it can display it as required by the interface, idea, and context.

09

Outlook

What’s next?

Let's explore the new creative playgrounds. In the next steps, I'll explore the storytelling potential. Perhaps an idea will arise from the fact that the Lidar can recognize the environment even in the dark and generate images on soemthing only it can see?